FLOPS与FLOPs

FLOPS:注意全大写,是floating point operations per second的缩写,意指每秒浮点运算次数,理解为计算速度。是一个衡量硬件性能的指标。

FLOPs:注意s小写,是floating point operations的缩写(s表复数),意指浮点运算数,理解为计算量。可以用来衡量算法/模型的复杂度。

全连接网络中FLOPs的计算

推导

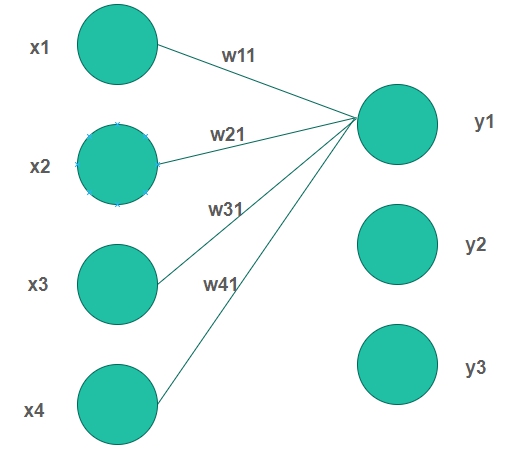

以4个输入神经元和3个输出神经元为例

计算一个输出神经元的的计算过程为

$$ y1 = w_{11}*x_1+w_{21}*x_2+w_{31}*x_3+w_{41}*x_4 $$

所需的计算次数为

- 4次乘法

- 3次加法

共需4+3=7计算。推广到I个输入神经元O个输出神经元后则计算一个输出神经元所需要的计算次数为$I+(I-1)=2I-1$,则总的计算次数为

$$ FLOPs = (2I-1)*O $$

考虑bias则为

$$ y1 = w_{11}*x_1+w_{21}*x_2+w_{31}*x_3+w_{41}*x_4+b1 $$

总的计算次数为

$$ FLOPs = 2I*O $$

结果

FC(full connected)层FLOPs的计算公式如下(不考虑bias时有-1,有bias时没有-1):

$$ FLOPs = (2 \times I - 1) \times O $$

其中:

- I = input neuron numbers(输入神经元的数量)

- O = output neuron numbers(输出神经元的数量)

CNN中FLOPs的计算

以下答案不考虑activation function的运算

推导

对于输入通道数为$C_{in}$,卷积核的大小为K,输出通道数为$C_{out}$,输出特征图的尺寸为$H*W$

进行一次卷积运算的计算次数为

- 乘法$C_{in}K^2$次

- 加法$C_{in}K^2-1$次

- 共计$C_{in}K^2+C_{in}K^2-1=2C_{in}K^2-1$次,若考虑bias则再加1次

- 得到一个channel的特征图所需的卷积次数为$H*W$次

- 共计需得到$C_{out}$个特征图

因此对于CNN中的一个卷积层来说总的计算次数为(不考虑bias时有-1,考虑bias时没有-1):

$$ FLOPs = (2C_{in}K^2-1)HWC_{out} $$

结果

卷积层FLOPs的计算公式如下(不考虑bias时有-1,有bias时没有-1):

$$ FLOPs = (2C_{in}K^2-1)HWC_{out} $$

其中:

- $C_{in}$ = input channel

- K= kernel size

- H,W = output feature map size

- $C_{out}$ = output channel

计算FLOPs的代码或包

- torchstat

from torchstat import stat

import torchvision.models as models

model = models.vgg16()

stat(model, (3, 224, 224)) module name input shape output shape params memory(MB) MAdd Flops MemRead(B) MemWrite(B) duration[%] MemR+W(B)

0 features.0 3 224 224 64 224 224 1792.0 12.25 173,408,256.0 89,915,392.0 609280.0 12845056.0 3.67% 13454336.0

1 features.1 64 224 224 64 224 224 0.0 12.25 3,211,264.0 3,211,264.0 12845056.0 12845056.0 1.83% 25690112.0

2 features.2 64 224 224 64 224 224 36928.0 12.25 3,699,376,128.0 1,852,899,328.0 12992768.0 12845056.0 8.43% 25837824.0

3 features.3 64 224 224 64 224 224 0.0 12.25 3,211,264.0 3,211,264.0 12845056.0 12845056.0 1.45% 25690112.0

4 features.4 64 224 224 64 112 112 0.0 3.06 2,408,448.0 3,211,264.0 12845056.0 3211264.0 11.37% 16056320.0

5 features.5 64 112 112 128 112 112 73856.0 6.12 1,849,688,064.0 926,449,664.0 3506688.0 6422528.0 4.03% 9929216.0

6 features.6 128 112 112 128 112 112 0.0 6.12 1,605,632.0 1,605,632.0 6422528.0 6422528.0 0.73% 12845056.0

7 features.7 128 112 112 128 112 112 147584.0 6.12 3,699,376,128.0 1,851,293,696.0 7012864.0 6422528.0 5.86% 13435392.0

8 features.8 128 112 112 128 112 112 0.0 6.12 1,605,632.0 1,605,632.0 6422528.0 6422528.0 0.37% 12845056.0

9 features.9 128 112 112 128 56 56 0.0 1.53 1,204,224.0 1,605,632.0 6422528.0 1605632.0 7.32% 8028160.0

10 features.10 128 56 56 256 56 56 295168.0 3.06 1,849,688,064.0 925,646,848.0 2786304.0 3211264.0 3.30% 5997568.0

11 features.11 256 56 56 256 56 56 0.0 3.06 802,816.0 802,816.0 3211264.0 3211264.0 0.00% 6422528.0

12 features.12 256 56 56 256 56 56 590080.0 3.06 3,699,376,128.0 1,850,490,880.0 5571584.0 3211264.0 5.13% 8782848.0

13 features.13 256 56 56 256 56 56 0.0 3.06 802,816.0 802,816.0 3211264.0 3211264.0 0.37% 6422528.0

14 features.14 256 56 56 256 56 56 590080.0 3.06 3,699,376,128.0 1,850,490,880.0 5571584.0 3211264.0 4.76% 8782848.0

15 features.15 256 56 56 256 56 56 0.0 3.06 802,816.0 802,816.0 3211264.0 3211264.0 0.37% 6422528.0

16 features.16 256 56 56 256 28 28 0.0 0.77 602,112.0 802,816.0 3211264.0 802816.0 2.56% 4014080.0

17 features.17 256 28 28 512 28 28 1180160.0 1.53 1,849,688,064.0 925,245,440.0 5523456.0 1605632.0 3.66% 7129088.0

18 features.18 512 28 28 512 28 28 0.0 1.53 401,408.0 401,408.0 1605632.0 1605632.0 0.00% 3211264.0

19 features.19 512 28 28 512 28 28 2359808.0 1.53 3,699,376,128.0 1,850,089,472.0 11044864.0 1605632.0 5.50% 12650496.0

20 features.20 512 28 28 512 28 28 0.0 1.53 401,408.0 401,408.0 1605632.0 1605632.0 0.00% 3211264.0

21 features.21 512 28 28 512 28 28 2359808.0 1.53 3,699,376,128.0 1,850,089,472.0 11044864.0 1605632.0 5.49% 12650496.0

22 features.22 512 28 28 512 28 28 0.0 1.53 401,408.0 401,408.0 1605632.0 1605632.0 0.00% 3211264.0

23 features.23 512 28 28 512 14 14 0.0 0.38 301,056.0 401,408.0 1605632.0 401408.0 1.10% 2007040.0

24 features.24 512 14 14 512 14 14 2359808.0 0.38 924,844,032.0 462,522,368.0 9840640.0 401408.0 2.94% 10242048.0

25 features.25 512 14 14 512 14 14 0.0 0.38 100,352.0 100,352.0 401408.0 401408.0 0.00% 802816.0

26 features.26 512 14 14 512 14 14 2359808.0 0.38 924,844,032.0 462,522,368.0 9840640.0 401408.0 2.57% 10242048.0

27 features.27 512 14 14 512 14 14 0.0 0.38 100,352.0 100,352.0 401408.0 401408.0 0.00% 802816.0

28 features.28 512 14 14 512 14 14 2359808.0 0.38 924,844,032.0 462,522,368.0 9840640.0 401408.0 2.19% 10242048.0

29 features.29 512 14 14 512 14 14 0.0 0.38 100,352.0 100,352.0 401408.0 401408.0 0.37% 802816.0

30 features.30 512 14 14 512 7 7 0.0 0.10 75,264.0 100,352.0 401408.0 100352.0 0.37% 501760.0

31 avgpool 512 7 7 512 7 7 0.0 0.10 0.0 0.0 0.0 0.0 0.00% 0.0

32 classifier.0 25088 4096 102764544.0 0.02 205,516,800.0 102,760,448.0 411158528.0 16384.0 10.62% 411174912.0

33 classifier.1 4096 4096 0.0 0.02 4,096.0 4,096.0 16384.0 16384.0 0.00% 32768.0

34 classifier.2 4096 4096 0.0 0.02 0.0 0.0 0.0 0.0 0.37% 0.0

35 classifier.3 4096 4096 16781312.0 0.02 33,550,336.0 16,777,216.0 67141632.0 16384.0 2.20% 67158016.0

36 classifier.4 4096 4096 0.0 0.02 4,096.0 4,096.0 16384.0 16384.0 0.00% 32768.0

37 classifier.5 4096 4096 0.0 0.02 0.0 0.0 0.0 0.0 0.37% 0.0

38 classifier.6 4096 1000 4097000.0 0.00 8,191,000.0 4,096,000.0 16404384.0 4000.0 0.73% 16408384.0

total 138357544.0 109.39 30,958,666,264.0 15,503,489,024.0 16404384.0 4000.0 100.00% 783170624.0

============================================================================================================================================================

Total params: 138,357,544

------------------------------------------------------------------------------------------------------------------------------------------------------------

Total memory: 109.39MB

Total MAdd: 30.96GMAdd

Total Flops: 15.5GFlops

Total MemR+W: 746.89MB

参考资料

- CNN 模型所需的计算力(flops)和参数(parameters)数量是怎么计算的?

- 分享一个FLOPs计算神器

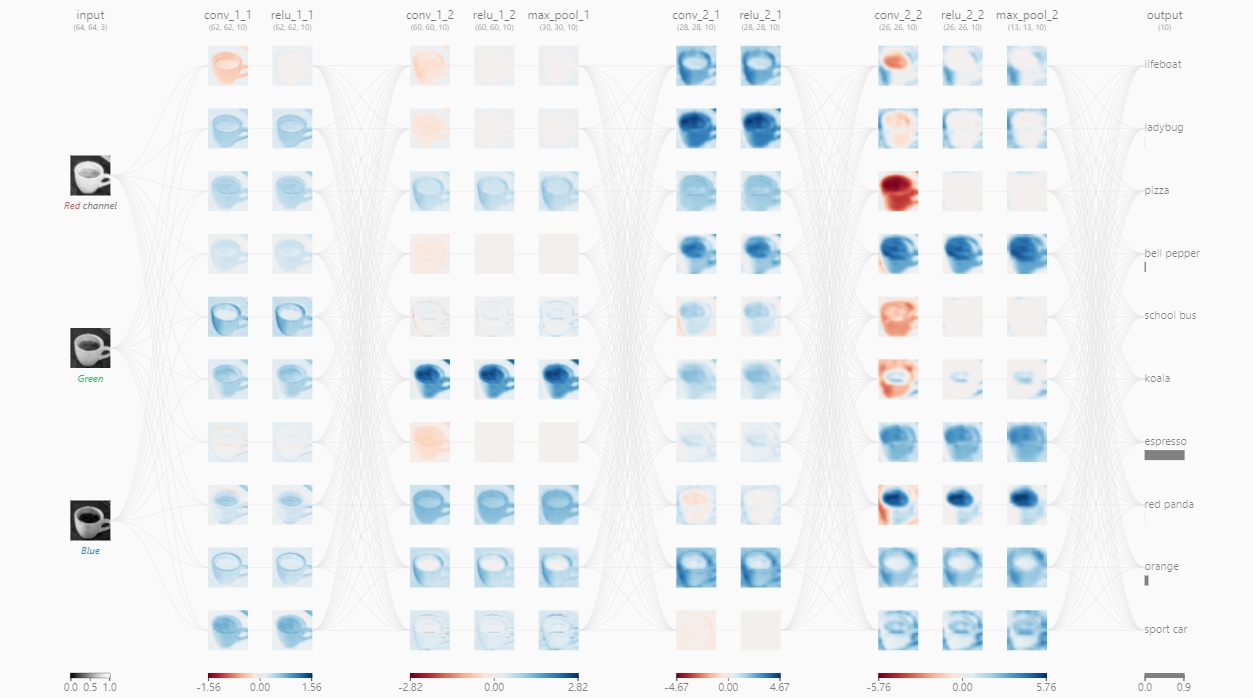

- CNN Explainer

- [Molchanov P , Tyree S , Karras T , et al. Pruning Convolutional Neural Networks for Resource Efficient Transfer Learning[J]. 2016.](https://arxiv.org/pdf/1611.06440.pdf)

评论 (0)